Research Areas

Data-driven Predictive Control of Uncertain Systems

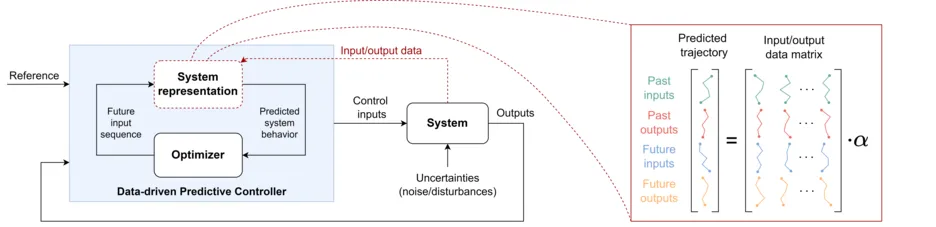

For safe control of dynamical systems, one must consider various critical factors in controller design, e.g., constraints on system variables due to physical limitations, environmental uncertainties like process disturbances, and inexact system knowledge due to modeling inaccuracies. Instead of physical modeling, which can be cumbersome for complex systems, data can be used to derive system representations for controller design.

In this field of research, we develop data-driven predictive controllers for uncertain systems, aiming for real-time applicability and closed-loop guarantees of constraint satisfaction and stability. We do so by integrating and extending concepts from data-driven and learning-based control, as well as robust and stochastic Model Predictive Control (MPC).

Contact: Johannes Teutsch

Related Publications:

- Kerz, S.; Teutsch, J.; Brüdigam, T.; Wollherr, D.; Leibold, M.: "Data-driven Tube-Based Stochastic Predictive Control.", IEEE Open Journal of Control Systems, vol. 2, pp. 185-199, 2023 [Link]

- Teutsch, J.; Kerz, S.; Wollherr, D.; Leibold, M.: "Sampling-based Stochastic Data-driven Predictive Control under Data Uncertainty.", arXiv:2402.00681, 2024 [Link]

- Teutsch, J.; Narr, C.; Kerz, S.; Wollherr, D.; Leibold, M.: "Adaptive Stochastic Predictive Control from Noisy Data: A Sampling-based Approach.", IEEE 63rd Conference on Decision and Control (CDC), 2024 [Link]

Active Fault Diagnosis and Control in Dynamic Systems

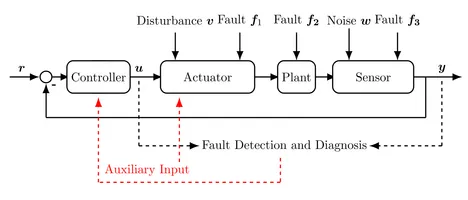

Our research explores new methods for detecting faults in dynamic systems, especially those that are not apparent during normal operation. When observed data do not provide enough information to distinguish between nominal and fault scenarios, we generate auxiliary input signals to identify different fault models (which may be known or unknown). This method is also known as active fault detection. The goal is to demonstrate the ability to simultaneously achieve control goals and model separation. To this end, we work on merging Model Predictive Control (MPC) methods with active fault detection methods. The method can be applied to many different systems and will contribute to the overall improvement of system reliability and safety by ensuring effective fault detection and management.
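The role of the auxiliary input can be illustrated with a deliberately simple sketch. It assumes two static gain models (nominal and faulty) with a bounded output disturbance; the models, the names `outputs_separable` and `minimal_separating_input`, and all numbers are hypothetical and not taken from the research described above. Without excitation the two output sets coincide, and they become disjoint only once the input is large enough.

```python
def outputs_separable(u, gain_nominal, gain_fault, noise_bound):
    """Check whether the output intervals of both models are disjoint.

    Each model predicts y = gain * u + w with |w| <= noise_bound, so its
    output set is an interval of half-width noise_bound around gain * u.
    """
    return abs(gain_nominal - gain_fault) * abs(u) > 2.0 * noise_bound


def minimal_separating_input(gain_nominal, gain_fault, noise_bound):
    """Input magnitude above which the two output intervals no longer overlap."""
    return 2.0 * noise_bound / abs(gain_nominal - gain_fault)


# Hypothetical example: nominal gain 1.0, faulty gain 0.8, disturbance bound 0.1.
u_min = minimal_separating_input(1.0, 0.8, 0.1)
```

With zero input both models produce the same output set, so no amount of passive observation distinguishes them; any input with magnitude above `u_min` guarantees that a single measurement is consistent with only one model.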

Contact: Annalena Daniels

Optimal Control for Crops in Vertical Farms

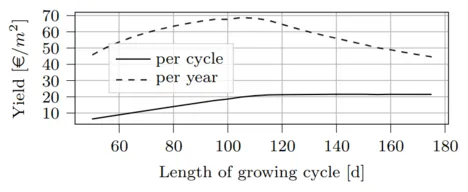

This research area focuses on enhancing the efficiency and productivity of vertical farming through optimal control strategies. Vertical farming offers year-round cultivation by maintaining optimal growing conditions, leading to faster crop maturity and higher yields than traditional farming. However, it faces challenges such as high energy consumption. The research aims to optimize the growth of different crops (e.g., wheat and tomatoes) by adjusting inputs like water, radiation, and temperature and by determining the optimal growth period duration to maximize yearly yields. By employing a nonlinear, discrete-time hybrid model, we seek to balance resource use, yield profit, and growth period, demonstrating the significant potential of control theory in improving vertical farming practices.
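The trade-off behind the growth period duration can be seen in a toy calculation. The sketch below assumes a hypothetical logistic growth curve and a fixed turnaround time between cycles, and it treats the number of cycles per year as a continuous quantity; none of these choices come from the hybrid crop model mentioned above.

```python
import math


def biomass(t_days, max_yield=1.0, rate=0.15, midpoint=40.0):
    """Hypothetical logistic growth curve: harvestable yield after t_days."""
    return max_yield / (1.0 + math.exp(-rate * (t_days - midpoint)))


def yearly_yield(period_days, turnaround_days=2.0):
    """Yield per year when every cycle lasts period_days plus a turnaround."""
    cycles_per_year = 365.0 / (period_days + turnaround_days)
    return cycles_per_year * biomass(period_days)


# Grid search over candidate growth periods (in days).
best_period = max(range(10, 121), key=yearly_yield)
```

Harvesting very early wastes most of the growth potential, while harvesting very late leaves room for only a few cycles per year, so the best period lies strictly between the two extremes of the search range.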

Contact: Annalena Daniels, Michael Fink

Related Publications:

- Fink, M.; Daniels, A.; Qian, C.; Velásquez, V.M.; Salotra, S.; Wollherr, D.: "Comparison of Dynamic Tomato Growth Models for Optimal Control in Greenhouses.", 2023 IEEE International Conference on Agrosystem Engineering, Technology & Applications (AGRETA), 2023 [Link]

- Daniels, A.; Fink, M.; Leibold, M.; Wollherr, D.; Asseng, S.: "Optimal Control for Indoor Vertical Farms Based on Crop Growth.", IFAC-PapersOnLine 56 (2), 9887-9893, 2023 [Link]

Previous Research Areas

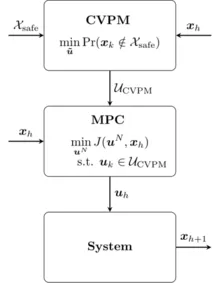

System uncertainty can be handled in different ways within MPC. Robust MPC, as the name indicates, robustly accounts for the uncertainty, often resulting in conservative solutions. Stochastic MPC yields more efficient solutions but permits a small probability of constraint violation, set by a predefined risk parameter.

In contrast to Robust MPC and Stochastic MPC, we propose an MPC method (CVPM-MPC), which minimizes the probability that a constraint is violated while also optimizing other control objectives. The proposed method is capable of dealing with changing uncertainty and does not require choosing a risk parameter. CVPM-MPC can be regarded as a link between Robust and Stochastic MPC.
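The difference between the three approaches can be summarized by how each treats a state constraint. The notation below is an illustrative assumption rather than the formulation used in the publications: $x_k$ is the predicted state, $\mathcal{X}$ the admissible set, $w_k \in \mathcal{W}$ a bounded disturbance, $u$ the input sequence, and $\beta$ the required satisfaction probability (one minus the risk parameter).

```latex
\begin{align*}
\text{Robust MPC:}\quad     & x_k \in \mathcal{X} \quad \text{for all } w_k \in \mathcal{W}, \\
\text{Stochastic MPC:}\quad & \Pr\left(x_k \in \mathcal{X}\right) \ge \beta, \\
\text{CVPM-MPC:}\quad       & \min_{u} \; \Pr\left(x_k \notin \mathcal{X}\right).
\end{align*}
```

In this reading, the violation probability is itself minimized instead of being bounded by a user-chosen value, which is why no risk parameter has to be selected.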

One promising application for the CVPM-MPC method is in the field of autonomous driving. In this context, the constraint violation probability directly correlates to the risk of collision, making the need for a method that minimizes this probability crucial.

Contact: Michael Fink

Related Publications:

- Brüdigam, T.; Gaßmann, V.; Wollherr, D.; Leibold, M.: "Minimization of constraint violation probability in model predictive control.", International Journal of Robust and Nonlinear Control 31.14, 2021 [Link]

- Fink, M.; Brüdigam, T.; Wollherr, D.; Leibold, M.: "Minimal Constraint Violation Probability in Model Predictive Control for Linear Systems.", IEEE Transactions on Automatic Control, 2021 [Link]

- Benciolini, T.; Fink, M.; Güzelkaya, N.; Wollherr, D.; Leibold, M.: "Safe and Non-Conservative Trajectory Planning for Autonomous Driving Handling Unanticipated Behaviors of Traffic Participants.", Accepted to the 27th IEEE International Conference on Intelligent Transportation Systems, 2024 [Link]

Social networks constituted by social agents and their social relations are ubiquitous in our daily lives. Dynamic processes over social networks, which are highly related to our social activities and decision making, are prominent research topics in both theory and practice.

In this research, we examine two typical dynamic processes on social networks, information epidemics and opinion dynamics, with the aim of bridging the gap between social network analysis and control theory. By analogy with epidemics spreading in a population, information epidemics are introduced to describe information diffusion in social networks. We study the existence of the endemic and disease-free equilibria as well as their stability conditions. Additionally, the desired diffusion performance is achieved via a novel optimal control framework, which is a promising approach to the open problem of optimal control for information epidemics. Apart from diffusion processes, opinion dynamics, which describe the evolution of individual opinions under social influence, are examined from a control-theoretic point of view. We focus on opinion dynamics over social networks with cooperative-competitive interactions and address the existence question: under what conditions do distributed protocols exist such that the opinions reach polarization, consensus, or neutralization? Particular emphasis is placed on the joint impact of the dynamical properties of (both homogeneous and heterogeneous) individual opinions and the interaction topology with respect to static diffusive coupling protocols.

Contact: Yuhong Chen

Related Publications:

- Chen, Y.; Dai, X.; Buss, M.; Liu, F.: “Coevolution of Opinion Dynamics and Recommendation System: Modeling Analysis and Reinforcement Learning Based Manipulation.”, arXiv:2411.11687, 2024 [Link]

- Liu, F.; Chen, Y.; Liu, T.; Xue, D.; Buss, M.: “Distributed Link Removal Strategy for Networked Meta-Population Epidemics and Its Application to the Control of the COVID-19 Pandemic.”, IEEE Conference on Decision and Control (CDC), 2021 [Link]

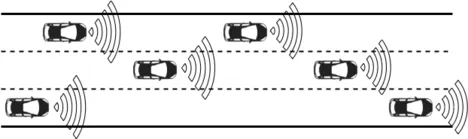

Autonomous vehicles face the challenge of providing efficient transportation while safely maneuvering in an uncertain environment. Uncertainties arise in various forms, mainly because the ego vehicle is unable to perfectly predict future motion of surrounding vehicles, cyclists, and pedestrians. For example, the ego vehicle must be prepared for a sudden vehicle lane change maneuver or unforeseen jaywalking pedestrians.

We develop Model Predictive Control (MPC) methods to advance automated driving. MPC effectively handles constraints that the vehicle must meet, e.g., lane boundaries, speed limits, and collision avoidance. We tackle uncertainties in the environment by applying Stochastic Model Predictive Control (SMPC). Accounting for all uncertainties may result in overly conservative driving, greatly limiting performance, especially in urban driving situations. In some scenarios, a collision may even be inevitable. In SMPC, constraints are relaxed into chance constraints. These are not required to hold at all times; instead, a probability parameter specifies the desired probability of constraint satisfaction, considering system uncertainty. In other words, a lower probability parameter permits more frequent constraint violations but increases performance.
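The effect of the probability parameter can be made concrete with a minimal sketch. It assumes a scalar constraint `x + w <= x_max` with zero-mean Gaussian uncertainty `w` of standard deviation `sigma`; the Gaussian assumption and the function name `tightened_bound` are illustrative and not taken from the publications below. Under this assumption, the chance constraint is equivalent to a tightened deterministic bound on the nominal value `x`.

```python
from statistics import NormalDist


def tightened_bound(x_max, sigma, beta):
    """Largest nominal x with P(x + w <= x_max) >= beta for w ~ N(0, sigma^2).

    The chance constraint is equivalent to x <= x_max - sigma * q, where q is
    the beta-quantile of the standard normal distribution.
    """
    if not 0.0 < beta < 1.0:
        raise ValueError("beta must lie strictly between 0 and 1")
    return x_max - sigma * NormalDist().inv_cdf(beta)


# Hypothetical lateral bound of 10.0 with uncertainty sigma = 1.0.
cautious = tightened_bound(10.0, 1.0, 0.95)
relaxed = tightened_bound(10.0, 1.0, 0.80)
```

A lower probability parameter removes less of the admissible region (`relaxed` is larger than `cautious`), which is the source of the performance gain and of the more frequent violations.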

In our research, we focus on safety and efficiency for automated vehicle trajectory planning with SMPC. By combining SMPC with failsafe trajectory planning, the advantage of optimistic vehicle behavior with SMPC is combined with the safety guarantees of failsafe trajectory planning. Further contributions include grid-based SMPC approaches, specifically accounting for maneuver uncertainty and maneuver execution uncertainty of vehicles. Multistage SMPC is also adopted to plan the motion of the ego vehicle, explicitly differentiating between short-term and long-term decisions, each with its own required features and timescale.

Contact: Tim Brüdigam, Tommaso Benciolini

Related Publications:

- Benciolini, T.; Brüdigam, T.; Leibold, M.: Multistage Stochastic Model Predictive Control for Urban Automated Driving. 24th IEEE International Conference on Intelligent Transportation Systems, 2021 [Full text (DOI)] [Full text (mediaTUM)]

- Brüdigam, T.; Olbrich, M.; Wollherr, D.; Leibold, M.: Stochastic Model Predictive Control with a Safety Guarantee for Automated Driving. IEEE Transactions on Intelligent Vehicles, 2021, 1-1 [Full text (DOI)]

- Brüdigam, T.; di Luzio, F.; Pallottino, L.; Wollherr, D.; Leibold, M.: Grid-Based Stochastic Model Predictive Control for Trajectory Planning in Uncertain Environments. 23rd IEEE International Conference on Intelligent Transportation Systems, 2020 [Full text (DOI)] [Full text (mediaTUM)]

- Brüdigam, T.; Olbrich, M.; Leibold, M.; Wollherr, D.: Stochastic Model Predictive Control with a Safety Guarantee for Automated Driving. 21st IEEE International Conference on Intelligent Transportation Systems, 2018 [Full text (DOI)] [Full text (mediaTUM)]

Recent improvements in vehicle automation and communication systems provide a good basis for the study of multi-vehicle systems. Stochastic Model Predictive Control (SMPC) is used to control autonomous vehicles because of its ability to deal with constraints in a non-conservative way. Current research on controlling autonomous vehicles with SMPC focuses on the behavior of an individual SMPC vehicle and usually ignores how the other vehicles react to its behavior. However, in real traffic, all vehicles tend to react to the other vehicles in the environment.

In our multi-vehicle system, the interactions between vehicles are taken into consideration by introducing a distributed SMPC framework, where all vehicles are controlled by SMPC and are able to react to other vehicles’ behaviors. Our current framework works well in most scenarios, but cyclic traffic behaviors may occur in some. Here, cyclic behavior means that two vehicles simultaneously try to occupy the road or simultaneously yield to each other.

In the future, we will focus on finding methods to alleviate or even resolve these cyclic behaviors.

Contact: Ni Dang

In human-machine interaction, the robot not only needs to satisfy physical constraints due to the limits of its mechanical system but also has to guarantee safety for itself and the humans in its surroundings. Therefore, the robot has to evaluate available actions to be taken, interpret human motion with respect to possible tasks currently executed by the human, and select and execute its own action accordingly. Such a safe interaction also requires planning legible motions for the robot that can easily be understood by its human partner.

One of the key aims of this research is to develop novel motion planning methods that enable the robot to proactively interact with human partners. We aim to develop a continuous-time motion planning framework based on probabilistic inference that incorporates future human actions and safety constraints for effective human-robot interaction (HRI) in a human-robot assembly setup.

Contact: Salman Bari

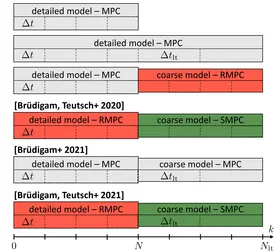

A long prediction horizon in MPC is often beneficial. However, a long prediction horizon with a detailed prediction model quickly becomes computationally challenging. We provide different adaptations to MPC in order to take advantage of long prediction horizons while keeping the computational effort manageable.

These adaptations are based on two ideas:

- A simple system model is used for long-term predictions (with a detailed short-term prediction model).

- The sampling time is increased along the horizon, resulting in a non-uniformly spaced MPC prediction horizon.

In addition, these adaptations are combined with methods from Robust MPC and Stochastic MPC to account for potential model uncertainty and disturbances.
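The second idea, a sampling time that grows along the horizon, can be sketched as follows. The geometric growth rule and the function name `nonuniform_time_grid` are assumptions made for illustration; the publications below may use a different spacing rule.

```python
def nonuniform_time_grid(n_steps, dt0, growth):
    """Prediction time instants whose spacing grows geometrically.

    n_steps: number of prediction steps
    dt0:     sampling time of the first step
    growth:  factor by which the sampling time increases per step (1.0 = uniform)
    """
    times = [0.0]
    dt = dt0
    for _ in range(n_steps):
        times.append(times[-1] + dt)
        dt *= growth
    return times


# Four steps starting at 0.5 s and doubling each step.
grid = nonuniform_time_grid(n_steps=4, dt0=0.5, growth=2.0)
# A uniform grid with the same initial sampling time and the same number of steps.
uniform = nonuniform_time_grid(n_steps=4, dt0=0.5, growth=1.0)
```

Here `grid` reaches 7.5 s with four decision steps, whereas `uniform` covers only 2.0 s, so the non-uniform grid looks further ahead with the same number of optimization variables at the cost of coarser long-term resolution.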

Contact: Tim Brüdigam, Johannes Teutsch

Related Publications:

- Brüdigam, T.; Teutsch, J.; Wollherr, D.; Leibold, M.; Buss, M.: Probabilistic model predictive control for extended prediction horizons. at - Automatisierungstechnik, vol. 69, no. 9, 2021, pp. 759-770 [Full text (DOI)]

- Brüdigam, T.; Prader, D.; Wollherr, D.; Leibold, M.: Model Predictive Control with Models of Different Granularity and a Non-uniformly Spaced Prediction Horizon. American Control Conference (ACC), 2021 [Full text (DOI)]

- Brüdigam, T.; Teutsch, J.; Wollherr, D.; Leibold, M.: Combined Robust and Stochastic Model Predictive Control for Models of Different Granularity. 21st IFAC World Congress, 2020 [Full text (DOI)] [Full text (mediaTUM)]

Many physical systems exhibit hybrid behavior, i.e., continuous state evolution combined with discrete mode switching. The main challenges in designing controllers for hybrid systems are uncertainties and switching behavior. If the uncertainty is very large or the system parameters vary, a single fixed controller may not be able to stabilize the whole system. In such cases, the uncertainty and varying parameters should be captured by learning approaches, and the controller must be adaptive. Furthermore, the switching behavior makes it harder to stabilize the whole system.

The goal of this work is to develop intelligent control approaches, which are also compatible with learning methods, for hybrid systems with uncertainties. Applications include robot impacts (walking, jumping, pick-and-place), soft robotics, flight control, etc.

Contact: Tong Liu

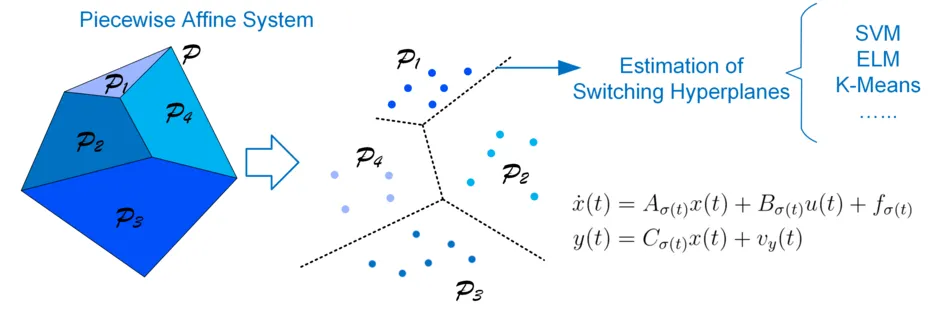

A hybrid system is a dynamical system that exhibits both continuous and discrete dynamic behavior. Piecewise affine (PWA) systems are a special class of hybrid systems that can describe systems with switching behavior. The switching signal of a PWA system is not exogenous but depends on a partitioning of the state-input space. Thus, estimating the switching hyperplanes is critical for the identification of PWA systems.

We plan to design an algorithm that estimates the switching hyperplanes using machine learning methods, e.g., support vector machines or extreme learning machines. The algorithm should require less prior information about the system: it should work without knowledge of the orders and the number of the subsystems and should use unlabeled state-input vectors. Once hyperplane estimation for PWA systems is achieved, the algorithm will be extended to other classes of hybrid systems.
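To make the state-dependent switching concrete, the following minimal sketch simulates one step of a scalar PWA system with two modes. The mode parameters, the hyperplane coefficients, and the names `active_mode` and `pwa_step` are hypothetical values chosen for illustration only.

```python
# Each mode is (a, b, f) for the affine update x_next = a * x + b * u + f.
# Hypothetical parameters, not identified from any real system.
MODES = [(0.5, 1.0, 0.0), (-0.5, 1.0, 1.0)]

# Hypothetical switching hyperplane in the (x, u) space: H_X * x + H_U * u = H_OFFSET.
H_X, H_U, H_OFFSET = 1.0, 1.0, 1.0


def active_mode(x, u):
    """Mode index determined by the side of the hyperplane on which (x, u) lies."""
    return 0 if H_X * x + H_U * u <= H_OFFSET else 1


def pwa_step(x, u):
    """Apply the affine dynamics of the active mode; return (x_next, mode)."""
    mode = active_mode(x, u)
    a, b, f = MODES[mode]
    return a * x + b * u + f, mode
```

Because the mode is a function of the state-input pair and is not supplied from outside, an identification procedure that only observes `(x, u, x_next)` samples has to recover the hyperplane in order to assign each sample to the correct subsystem.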

Contact: Yingwei Du

The control of robot manipulators has been investigated extensively over the past several decades, with considerable attention paid to the robustness and tracking accuracy of the controllers. However, the problem remains challenging owing to the inherent nonlinearity, strong coupling between joints, external disturbances, and unmodeled uncertainties of robot manipulators. For the computed torque method, backstepping, and other model-based methods, tracking performance deteriorates under external disturbances such as friction. Techniques such as disturbance observers and neural networks are employed to identify the disturbances online, which strengthens the robustness of the controller. Nevertheless, these techniques require some parameters to be identified online, which increases computational complexity.

In this research, we aim to develop a novel robust model-free control method that improves tracking precision and achieves sub-optimal tracking performance in the presence of unknown external disturbances and uncertainties. We will also take state and control constraints into consideration to guarantee the safety of the system.

Contact: Yongchao Wang

Redundant robots offer many interesting opportunities in manipulation tasks. This redundancy comes with the mathematical problem that an infinite number of joint configurations result in a desired end-effector pose. However, the performance capabilities of the robot rely heavily on the joint configuration, especially when joint limits are considered. In this context, we are not interested in finding only the single best joint configuration for a task, but in evaluating all possible solutions at once. Therefore, we developed an analytical approach for finding a manipulability map for a specific 7-DoF serial robot configuration. Knowledge of this manipulability map can answer many interesting research questions, e.g., the optimal mounting pose of the robot, the optimal end-effector configuration, or the optimal kinematic structure.
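How strongly manipulability depends on the joint configuration can be illustrated on a much smaller example. The sketch below uses a planar 3-link arm with a 2D position task, which is redundant, and Yoshikawa's measure $w = \sqrt{\det(J J^\top)}$. Both choices are assumptions for illustration: the text above does not state which measure is used, and the analytical 7-DoF map is not reproduced here.

```python
import math


def planar_jacobian(q, lengths=(1.0, 1.0, 1.0)):
    """Position Jacobian (2 x 3) of a planar 3-link arm with relative joint angles q."""
    angles = (q[0], q[0] + q[1], q[0] + q[1] + q[2])  # absolute link angles
    sines = [l * math.sin(a) for l, a in zip(lengths, angles)]
    cosines = [l * math.cos(a) for l, a in zip(lengths, angles)]
    # Joint j moves every link from j onwards, hence the partial sums.
    row_x = [-sum(sines[j:]) for j in range(3)]
    row_y = [sum(cosines[j:]) for j in range(3)]
    return row_x, row_y


def manipulability(q, lengths=(1.0, 1.0, 1.0)):
    """Yoshikawa manipulability sqrt(det(J J^T)) for the 2 x 3 Jacobian."""
    row_x, row_y = planar_jacobian(q, lengths)
    a = sum(v * v for v in row_x)
    b = sum(u * v for u, v in zip(row_x, row_y))
    d = sum(v * v for v in row_y)
    # Clamp tiny negative values caused by floating-point rounding.
    return math.sqrt(max(a * d - b * b, 0.0))


stretched = manipulability((0.0, 0.0, 0.0))
bent = manipulability((0.0, math.pi / 2, 0.0))
```

The fully stretched arm is singular, so `stretched` is zero, while the bent configuration has a clearly positive value. A manipulability map evaluates such a measure over all joint configurations that achieve a given end-effector pose.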

Contact: Gerold Huber

This research area focuses on industrial assembly processes with mixed human-robot teams, which are typically well understood and defined in advance. In order to interact seamlessly with humans, an autonomous agent is required to use the basic rules of an ongoing task to plan ahead the options available to each agent and to adapt actions on the fly. The main challenge when interacting with a human is that, unlike robots, humans do not always follow the same sequence of actions even when a detailed plan is provided. This has to be incorporated in the planning, allocation, and execution phases accordingly. Therefore, it is of utmost importance to analyze the mutual interference of each action. The sequence of actions can then be adjusted with respect to the human coworkers, unlike in classic robot planning, in which a robot follows a predetermined sequence of actions.

We propose an assembly planning and execution framework that incorporates well-understood methods of interactive game theory, optimal planning, and multi-agent reinforcement learning to enable robots not only to adapt to human co-workers but to actually cooperate with them in joint assembly processes.

Contact: Volker Gabler

While learning techniques have achieved impressive performance in various control tasks, the intermediate policies encountered during the learning process may be unsafe and hence drive the system into dangerous behaviors. In the literature, there are two main ways to impose safety in learning algorithms: modifying the cost function and guiding the exploration process.

We aim to utilize insights from invariance control and supervisory control to impose safety guarantees for learning-based controllers in event-driven hybrid systems. A supervisor is constructed based on the safe set obtained from reachability analysis or an invariance function, and this supervisor guides the learning process to ensure that the system remains inside the safe region while the learning-based controller is searching for an optimal policy.
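The supervisor idea can be sketched on a toy problem. The example assumes a scalar integrator `x_next = x + DT * u` and an interval safe set `|x| <= X_MAX`, with random inputs standing in for an exploring learning-based controller; the system, the safe set, and the names `supervise` and `explore` are hypothetical and far simpler than the event-driven hybrid systems considered in this research.

```python
import random

X_MAX = 1.0  # hypothetical safe set: |x| <= X_MAX
DT = 0.1     # hypothetical sampling time


def supervise(x, u_proposed):
    """Return the input closest to u_proposed that keeps x + DT * u in the safe set."""
    u_low = (-X_MAX - x) / DT
    u_high = (X_MAX - x) / DT
    return min(max(u_proposed, u_low), u_high)


def explore(n_steps=200, seed=0):
    """Simulate exploration with random inputs filtered by the supervisor."""
    rng = random.Random(seed)
    x = 0.0
    trajectory = [x]
    for _ in range(n_steps):
        u_learned = rng.uniform(-5.0, 5.0)  # stand-in for an exploring policy
        x = x + DT * supervise(x, u_learned)
        trajectory.append(x)
    return trajectory


trajectory = explore()
```

The supervisor leaves the proposed input untouched whenever it is safe and overrides it only at the boundary of the safe set, so exploration is restricted as little as possible while the state stays inside the safe region (up to floating-point rounding).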

Contact: Zhehua Zhou