Online Speaker Recognition for Teleconferencing Systems

Master's Thesis, Martin Rothbucher |

This thesis describes an online speaker recognition as part of an immersive system for teleconferencing, developed at the Institute for Data Processing. With this conference system, it is possible to create an individual audiostream for every speaker in a conference room by using only a device on the conference table. The current active speaker in a teleconference is identified online.

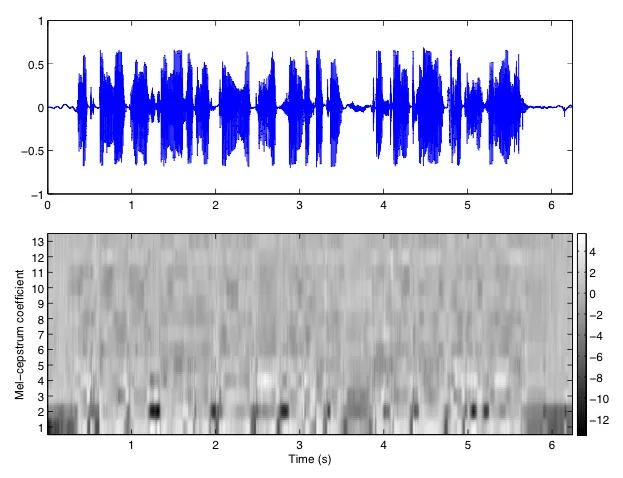

Therefore, a model of each conference participant is constructed by using short time spectral features. In the system approach Gaussian Mixture Models (GMMs) are applied for modeling individual speakers. Features are extracted from the incoming audio stream, constituting a likelihood score for every model and thus identifying the present speaker. Mel-frequency cepstral coefficients (MFCCs) are chosen as features to represent spectral attributes of different sounding voices. Due to the required low computational complexity of an online speaker recognition task, a Universal Background Model (UBM) in combina- tion with maximum aposteriori (MAP) adaptation is used to provide a very fast creation of speaker-dependent GMMs, with only few training data needed.