Manifold Optimization for Representation Learning

| Lecturers: | Julian Wörmann and Hao Shen |

| Assistants: | |

| Target audience: | Master's students from the first semester onward |

| ECTS: | 6 |

| Scope: | (2 SWS lecture / 2 SWS tutorial) |

| Cycle: | Summer semester |

| Registration: | TUMonline |

| Time & place: | Mon + Wed, 09:30 - 11:00 each, Z995 |

| Start: | First lecture on 15 April 2024 |

Content

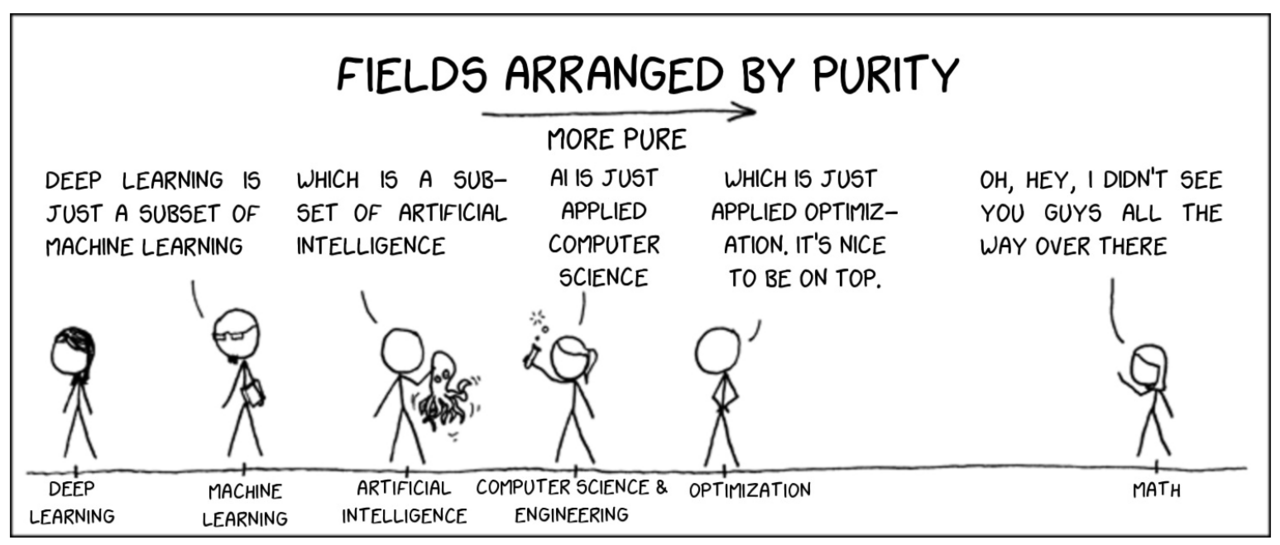

Learning suitable representations from data is key to the success of modern machine learning algorithms. Most representation learning models require solving an optimization problem whose variables are constrained to a matrix manifold. In this course, we study representation learning techniques from the perspective of manifold optimization.
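As an illustration only, such a problem can be written generically as the minimization of a cost function over a manifold. The symbols $f$, $\mathcal{M}$, and $\mathrm{St}(n, p)$ are notation assumed here, not taken from the course material; the Stiefel manifold is shown as one common choice of constraint set.

```latex
\min_{X \in \mathcal{M}} f(X),
\qquad \text{e.g. } \mathcal{M} = \mathrm{St}(n, p)
  = \{\, X \in \mathbb{R}^{n \times p} : X^\top X = I_p \,\}
```

For $p = 1$ the Stiefel manifold reduces to the unit sphere in $\mathbb{R}^n$.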

We will cover the following paradigms of representation learning:

- Descriptive representation, e.g. Independent Component Analysis, Matrix Factorization, Dictionary Learning;

- Discriminative representation, e.g. Trace Quotient Analysis;

- Deep representation, e.g. Multi-Layer Perceptron (MLP), Autoencoder.

Alongside these, we will cover concepts of manifold optimization, including:

- Coordinate-free derivatives in finite dimensional Hilbert spaces;

- Geometry of the unit sphere, the Stiefel manifold, the Grassmann manifold, etc.;

- Gradient descent algorithms on matrix manifolds;

- Conjugate gradient methods on matrix manifolds.
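To give a flavor of gradient descent on a manifold, here is a minimal sketch on the simplest case listed above, the unit sphere. It is not taken from the course material: the function name `sphere_gradient_descent`, the step size, the iteration count, and the test matrix are all illustrative assumptions. The sketch minimizes $f(x) = -x^\top A x$ subject to $\|x\| = 1$, whose minimizer is a leading eigenvector of the symmetric matrix $A$.

```python
import numpy as np


def sphere_gradient_descent(A, x0, step=0.1, iters=200):
    """Minimize f(x) = -x^T A x over the unit sphere.

    Illustrative sketch: fixed step size, no line search or stopping rule.
    """
    x = x0 / np.linalg.norm(x0)              # start on the sphere
    for _ in range(iters):
        egrad = -2.0 * A @ x                 # Euclidean gradient of f
        rgrad = egrad - (x @ egrad) * x      # project onto the tangent space at x
        y = x - step * rgrad                 # move along the negative Riemannian gradient
        x = y / np.linalg.norm(y)            # retraction: renormalize onto the sphere
    return x


# Hypothetical test problem: a small symmetric matrix.
A = np.array([[2.0, 1.0],
              [1.0, 2.0]])
x = sphere_gradient_descent(A, np.array([1.0, 0.0]))

# Compare the attained Rayleigh quotient with the largest eigenvalue of A.
print("x^T A x      :", x @ A @ x)
print("largest eig. :", np.linalg.eigvalsh(A)[-1])
```

The two ingredients that distinguish this from ordinary gradient descent are the projection of the Euclidean gradient onto the tangent space and the retraction that maps the updated point back onto the manifold. On the Stiefel or Grassmann manifold the same pattern applies with different projection and retraction formulas.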

Miscellaneous

Required skills and experience:

Basic knowledge of linear algebra, statistics, optimization, and machine learning, as well as basic knowledge of MATLAB or Python (or the motivation to learn one of them).

On completion of this course, students are able to:

- describe basic models of representation learning;

- explain the respective manifold constraints in the different representation learning paradigms;

- understand technical concepts in manifold optimization;

- derive simple manifold optimization algorithms to solve classic problems in representation learning;

- implement classic manifold optimization algorithms for different representation learning approaches.

Teaching methods:

The course consists partly of lectures using blackboard and slides, and partly of group and individual discussions in which new definitions and concepts are introduced through simple examples. In the tutorials, the exercises and programming tasks are discussed and students are supported in solving them.

The following kinds of media are used:

- Presentations

- Blackboard

- Exercises and course slides available for download

Literature

- P.-A. Absil, R. Mahony, R. Sepulchre. Optimization on Matrix Manifolds. Princeton University Press, 2008.

- A. Hyvärinen, J. Karhunen, E. Oja. Independent Component Analysis. Wiley-Interscience, 2001.

- C.C. Aggarwal. Neural Networks and Deep Learning: A Textbook. Springer, 2018.

- M. Elad. Sparse and Redundant Representations: From Theory to Applications in Signal and Image Processing. Springer, 2010.

Target Audience and Registration

The course is offered for the degree programs "Elektrotechnik und Informationstechnik" and "Mathematics in Data Science".

The target audience is Master's students from their first year onward.

Registration takes place via TUMonline.