We are always looking for motivated students from Computer Science, Robotics, Electrical Engineering, and related fields to work with us. We offer topics suitable for a Bachelor's or Master's thesis, as well as internships and working student positions (Werkstudententätigkeit).

If you're interested and would like more information, please don't hesitate to contact Prof. Lilienthal or any chair member.

Master’s Thesis: Human Movement and Activity Models for Production Automation and Embodied AI

Human activity is a fundamental part of modern production environments. Real-time dynamic perception and interpretation of human activity patterns help to optimize operations, increase workspace safety, conserve resources, and support efficient teaming with assistive robots. To that end, production automation draws on a wide array of methods for 3D perception and tracking of full-body poses, gaze and interactions with objects, as well as motion prediction and activity recognition.

Jointly with Occurrence AB, a dynamic startup based in Stockholm and Munich, we offer several MSc thesis topics concerning human activity in production environments. This is a unique chance to tackle industrial-grade use cases of production automation, work with real-world data, and gain experience with deployed software and data frameworks.

Possible topics include:

- Accurate perception of human poses from multiple heterogeneous vision sources in the environment

- Open-vocabulary activity recognition using vision-language models

- Status detection (e.g. engagement or distraction) and interpretation of dynamic task-oriented gestures

- Ground truth gaze data collection to improve camera-based gaze estimation

- Training on large amounts of human movement data

- Mobile multimodal camera-lidar rig for ground truth 3D environment perception

Prerequisites:

- Strong machine learning and deep learning background

- Excellent programming skills in Python

- Experience with PyTorch or TensorFlow is preferred

- Background in Informatics or Robotics (CIT School)

- Independent and self-motivated working style

If you are interested, just send an email to andrey.rudenko(at)tum.de and karen.biehl(at)occurrence.se with a short CV and your grade report.

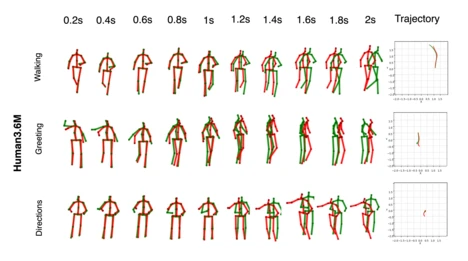

Master’s Thesis: Human Full-body Pose Modeling and Prediction

In order to safely navigate, interact and provide services, mobile robots should be able to detect, track and predict human motion. Understanding full-body poses is particularly useful to that end. A full-body pose describes the head orientation and attention, walking patterns, interactions with objects and many further useful aspects of human movement. Full-body poses of nearby humans are a key perception element in many domains, ranging from delivery and automation to healthcare, automated driving and interactive systems.

In this thesis topic, we aim to develop algorithms for full-body pose prediction. Starting off with a detection and tracking pipeline, we will collect and investigate diverse movement and interaction data in the target domain. Based on this analysis, we will develop methods for accurate movement and activity prediction.

Possible topics include:

- Multi-human data collection and interaction prediction

- Obstacle-aware pose prediction

- Fast short-term pose prediction with limited computing resources

- Handling of incomplete (variable-length) and variable-frequency inputs for reliable pose prediction

- Robustness to partial or full occlusions in the input sequence

Prerequisites:

- Strong machine learning and deep learning background

- Excellent programming skills in Python

- Experience with PyTorch or TensorFlow is preferred

- Background in Informatics or Robotics (CIT School)

- Independent and self-motivated working style

If you are interested, just send an email to andrey.rudenko(at)tum.de and tim.schreiter(at)tum.de with a short CV and your grade report.

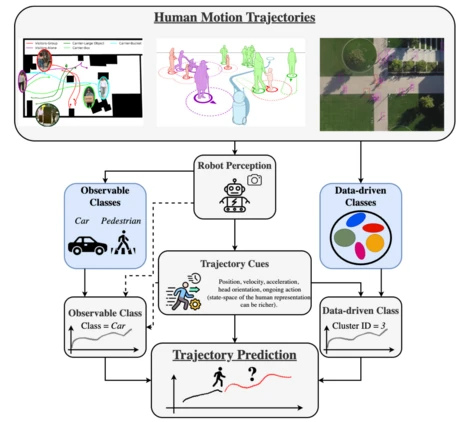

Master’s Thesis: Heterogeneous Human Movement Clustering and Prediction

Mobile and service robots operate in environments which are complex, stochastic and inherently dynamic, filled with human motion, interaction, activities and affordances. In order to safely navigate, avoid collisions, offer services and interact with their users, robots should be able to perceive and predict human motion. Traditional pipelines to that end include detection and tracking of each person’s position and full-body pose, and prediction of their positions and poses in the next few seconds.

Human movement is inherently heterogeneous, i.e. all people move differently due to their preferences, ongoing activities, physical abilities and constraints from the environment. This is evident in trajectory patterns, where distinct maneuvers exist, such as acceleration, turning or stopping. Prior art in motion prediction, however, largely ignores this heterogeneity, treating all movement as potentially equal, which leads to inaccurate predictions and loss of nuances in realistic settings.

This thesis will follow up on the strong results achieved in recent PhD work. The extensive methodology already available includes data processing methods, visualization and analysis tools; trajectory prediction methods based on VAEs, GANs, LSTMs and transformer networks; and clustering of dynamics patterns using Time-Series K-means and Self-conditioned GANs.
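To make the notion of distinct maneuvers concrete, here is a minimal sketch that derives per-step speed and heading change from a 2D trajectory and assigns a coarse label. It is an illustration only, not the method used in the prior work: the function names and all thresholds (`stop_speed`, `accel_thresh`, `turn_thresh`) are hypothetical and chosen for the toy example.

```python
import math

def motion_features(track, dt):
    """Return per-step speeds (m/s) and heading changes (rad) for (x, y) points."""
    speeds, headings = [], []
    for (x0, y0), (x1, y1) in zip(track, track[1:]):
        dx, dy = x1 - x0, y1 - y0
        speeds.append(math.hypot(dx, dy) / dt)
        headings.append(math.atan2(dy, dx))
    turns = []
    for h0, h1 in zip(headings, headings[1:]):
        # Wrap the heading difference into [-pi, pi)
        turns.append((h1 - h0 + math.pi) % (2 * math.pi) - math.pi)
    return speeds, turns

def label_maneuver(track, dt, stop_speed=0.2, accel_thresh=0.3, turn_thresh=0.5):
    """Assign a coarse maneuver label using hypothetical thresholds."""
    speeds, turns = motion_features(track, dt)
    if len(speeds) < 2:
        raise ValueError("need at least three points")
    if speeds[-1] < stop_speed:
        return "stopping"
    if abs(sum(turns)) > turn_thresh:  # net heading change over the window
        return "turning"
    mean_accel = (speeds[-1] - speeds[0]) / (dt * (len(speeds) - 1))
    if mean_accel > accel_thresh:
        return "accelerating"
    return "cruising"

if __name__ == "__main__":
    print(label_maneuver([(0, 0), (1, 0), (2, 0), (2, 1)], dt=1.0))
```

Hand-set thresholds like these are exactly what data-driven clustering is meant to replace; the sketch only shows which quantities such clusters tend to separate.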

Possible topics include:

- Time-variable Models of Human Movement Patterns

- Full-body Pose Movement Patterns

- Transfer of motion type detection and clustering techniques to other domains and moving entity types

Prerequisites:

- Strong machine learning and deep learning background

- Excellent programming skills in Python

- Experience with PyTorch or TensorFlow is preferred

- Background in Informatics or Robotics (CIT School)

- Independent and self-motivated working style

If you are interested, just send an email to andrey.rudenko(at)tum.de and tim.schreiter(at)tum.de with a short CV and your grade report.

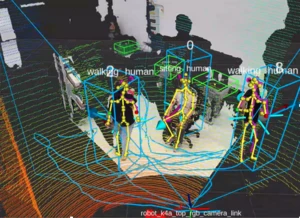

Master’s Thesis: Human Detection, Tracking and Activity Recognition

Mobile and service robots operate in environments which are complex, stochastic and inherently dynamic, filled with human motion, interaction, activities and affordances. In order to safely navigate, avoid collisions, offer services and interact with their users, robots should be able to perceive and predict human motion. Traditional pipelines to that end include detection and tracking of each person’s position and full-body pose, and prediction of their positions and poses in the next few seconds.

Accurate human perception is a challenging topic due to the complex dynamic motion, occlusions, interactions with objects and other people, semantic properties of the environment, etc. In this thesis we aim to address the challenges of human perception in domain-specific environments, considering for instance:

- Novel sensors: radar-based detection, thermal cameras, Wi-Fi-based state and activity perception

- Very long range position, orientation, pose and activity estimation

- Human pose and SMPL mesh recovery (e.g. from 3D lidar)

- 3D eye-gaze detection from RGB input

- Prediction-based 3D pose / mesh tracking refinement

Prerequisites:

- Strong machine learning and deep learning background

- Excellent programming skills in Python

- Experience with PyTorch or TensorFlow is preferred

- Background in Informatics or Robotics (CIT School)

- Independent and self-motivated working style

If you are interested, just send an email to andrey.rudenko(at)tum.de and tim.schreiter(at)tum.de with a short CV and your grade report.

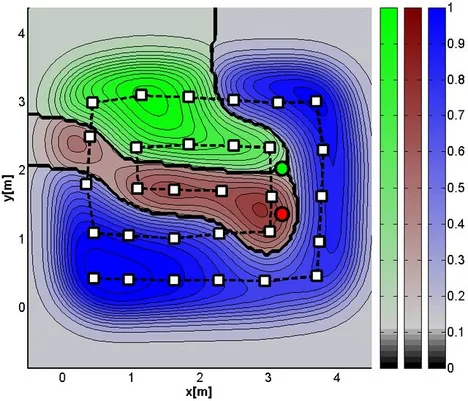

Master’s Thesis: Intelligent Sampling Strategy for Open Path Gas Sensing with Mobile Robots Using Reinforcement Learning

Gas leaks in industry or nature can harm humans, animals, and infrastructure. Finding the source of an invisible, potentially hazardous gas can be even more dangerous for human workers. So, it sounds like a perfect job for robots! To keep the robots from having to come into contact with the gas, open-path laser-based sensors are the method of choice. These systems allow us to remotely measure the gas concentration between two robots.

Your mission is to develop a strategy for navigating the robots to take remote measurements. This needs to be done intelligently so that we can determine the locations of gas as fast as possible. To this end, you will train the multi-agent system with reinforcement learning algorithms. To evaluate the sampling performance of your trained agents, you will compare it with other methods in terms of time and efficiency.

Prerequisites:

- Excellent programming skills in Python

- Experience with PyTorch or TensorFlow is preferred

- Background in Informatics or Robotics (CIT School)

- Independent and self-motivated working style

If you are interested, just send an email to marius.schaab@tum.de and thomas.wiedemann@tum.de with a short CV and your grade report.

Master’s Thesis: Multi-Agent Formation Planning for Gas Detection

Mobile robots or drones equipped with appropriate sensors are perfect platforms for sampling toxic or dangerous airborne trace substances or gases, avoiding threats to human operators. In emergency scenarios, deploying multiple agents to reduce response times is a further advantage.

The goal of the thesis is to plan optimal formations and paths for a multi-agent system to increase the chance of detecting gas emitted from a source at an unknown location. The planning should incorporate environmental parameters, such as wind, and model assumptions about gas propagation in air.

Requirements:

- Excellent programming skills in Python

- Good knowledge of numerical methods (FEM, parameter estimation, optimization)

- Self-motivated working and a good working knowledge of English or German

- Student at CIT

The Master’s Thesis might be carried out in collaboration with the Swarm Exploration Group at the Institute of Communications and Navigation at the German Aerospace Center (DLR) in Oberpfaffenhofen.

If you are interested, please send an email to thomas.wiedemann@tum.de with a short CV and your grade report.

Bachelor's or Master's Thesis: Intelligent Signal Processing and Sampling Strategies for Gas Source Estimation in Robotic Applications

This thesis aims to explore advanced signal processing and data fusion for gas source localization (GSL) in robotic olfaction. The focus is on developing efficient algorithms to handle noisy, sparse sensor data. Candidates will gain hands-on experience in data processing, probabilistic modeling, and experimental validation.

Robotic olfaction is a fascinating yet underdeveloped field compared to well-established areas like computer vision or acoustics. Despite its immense potential for applications in environmental monitoring, industrial safety, and disaster response, the development of reliable and efficient robotic olfaction systems remains a significant challenge.

This thesis will focus on addressing research questions about intelligent robot olfaction. Robot olfaction problems, such as Gas Distribution Mapping and Gas Source Localization, are challenging in real-world applications, requiring robust methods to handle noisy and sparse sensor data in dynamic environments. In this thesis, the research will involve developing and implementing methods that can efficiently process temporal-spatial sensor data, fuse heterogeneous inputs (e.g., gas concentration and wind speed), and make probabilistic estimations of gas source locations.

Possible Research Directions

Depending on the student’s interests, we will support exploration of one or more of the following topics:

- Sensor Signal Processing: Develop algorithms for online calibration, drift compensation, or gas sensor modeling to improve the utility of gas sensor measurements.

- Gas Source and Distribution Estimation: Design or adapt algorithms (e.g., Bayesian inference, Gaussian processes) to estimate gas source locations or map gas distributions from sparse and noisy sensor measurements.

- Adaptive Sampling Strategies: Investigate intelligent sampling methods (e.g., active learning, reinforcement learning) to optimize the selection of measurement points, enabling efficient and accurate gas source localization.
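As a rough illustration of the Bayesian inference direction listed above, the following sketch computes a posterior over candidate source cells on a grid from a few concentration readings. Everything in it is an assumption made for the example: the exponential-decay plume model, the Gaussian noise level, the uniform prior, and the function names are hypothetical and ignore wind and turbulence, which a real gas dispersion model would need to address.

```python
import math

def concentration(source, point, strength=1.0, length_scale=2.0):
    """Toy isotropic plume model: concentration decays exponentially with distance."""
    return strength * math.exp(-math.dist(source, point) / length_scale)

def source_posterior(measurements, grid, noise_std=0.05):
    """Posterior over candidate source cells, assuming a uniform prior and
    independent Gaussian measurement noise.

    measurements: list of ((x, y), concentration) pairs
    grid: list of candidate (x, y) source locations
    """
    log_post = []
    for cell in grid:
        log_lik = 0.0
        for pos, reading in measurements:
            residual = reading - concentration(cell, pos)
            log_lik += -0.5 * (residual / noise_std) ** 2
        log_post.append(log_lik)
    # Subtract the maximum before exponentiating for numerical stability
    peak = max(log_post)
    weights = [math.exp(lp - peak) for lp in log_post]
    total = sum(weights)
    return [w / total for w in weights]

if __name__ == "__main__":
    grid = [(x, y) for x in range(5) for y in range(5)]
    true_source = (3, 1)
    readings = [(p, concentration(true_source, p)) for p in [(0, 0), (4, 4), (0, 4)]]
    posterior = source_posterior(readings, grid)
    print(grid[posterior.index(max(posterior))])
```

An adaptive sampling strategy could then pick the next measurement point based on this posterior, for example where it is expected to reduce uncertainty the most.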

Prerequisites

- Excellent programming skills in Python

- Experience with PyTorch or TensorFlow is preferred

- Background in Informatics/Robotics (CIT School), Applied Mathematics, or Physics

- Independent and self-motivated working style

If you are interested, please send an email to han.fan@tum.de with a short CV and your grade report.

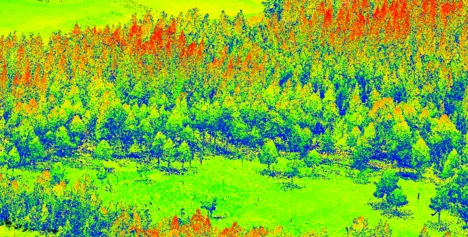

Master’s Thesis: Multi-Agent Path Planning for LiDAR Acquisition

In emergency search and rescue situations, time is of the essence, so systematic path optimization is essential. Laser scanners are a proven tool for gathering 3D information about ground surfaces, and drones can be used to acquire the data needed for mapping. By combining these compatible technologies, an optimized approach can be derived. Our goal is to find an optimal path for a swarm of drones to gather the necessary data in a search and rescue scenario.

Your task will be to investigate state-of-the-art path planning algorithms for a swarm of drones with the purpose of acquiring point cloud data. The solutions should be evaluated in simulations and in situ.

Requirements:

- Strong proficiency in Python programming; experience with ROS/ROS2 is a valuable advantage

- Hands-on experience with or a keen interest in hardware implementations

- Familiarity with point cloud data and image processing

- Ability to work independently with a self-motivated approach

- Fluent in English

This thesis is in collaboration with the German Aerospace Center (DLR). If you are interested, please send an email to Sigrid.strand@dlr.de with a short CV and transcript.

Working Student (Part-Time) for Implementing LiDAR Sensors on a Drone Swarm

Are you passionate about cutting-edge drone technology and eager to make an impact?

Join our swarm exploration team at DLR as a working student and contribute to integrating LiDAR sensors into a swarm of drones. This is your chance to gain hands-on experience in robotics and sensor integration, while working on real-world applications that push the boundaries of autonomous systems.

As part of our development team, you will assist in implementing and optimizing LiDAR systems on drone swarms, enabling precise navigation, obstacle detection, and forest mapping. You’ll work on tackling both software and hardware challenges. Tasks include:

- Designing and integrating LiDAR sensor mounts and configurations for drones.

- Developing and testing algorithms for sensor data processing.

- Assisting with field tests to validate LiDAR performance in swarm operations.

- Documenting findings, results, and improvements for future development.

Requirements:

- Enrollment in a relevant field of study: Robotics, Computer Science, Electrical Engineering, or similar.

- Familiarity with LiDAR technology, sensor integration, and data processing.

- Strong proficiency in Python programming; experience with ROS/ROS2 is a valuable advantage

- Knowledge of drone systems and related communication protocols is a plus.

- Ability to work independently with a self-motivated approach

- Availability for 10 to 18 hours per week.

- Fluent in English

If you are interested, please send an email to Sigrid.strand@dlr.de with a short CV and transcript.

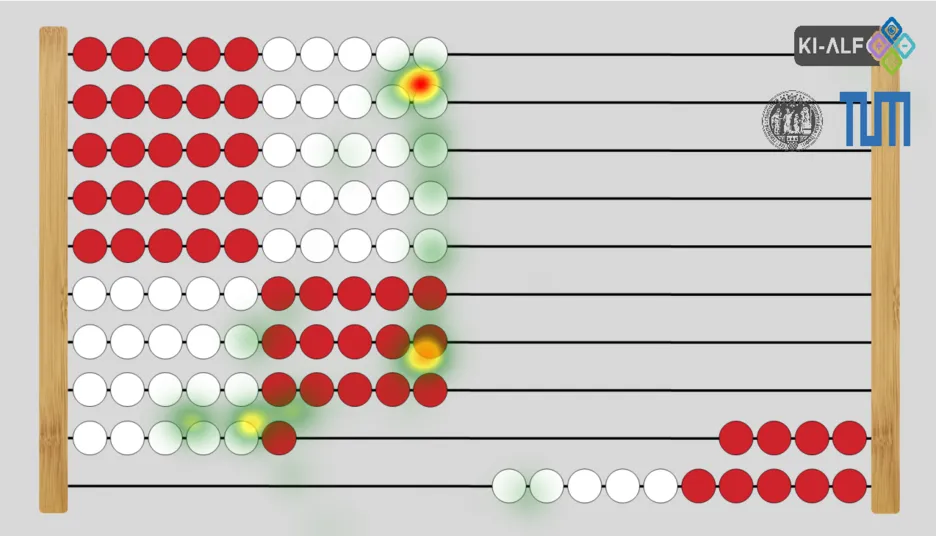

Explore the World of Eye Tracking

Visual perception plays a huge role in how we experience the world and make sense of information. It is often said that nearly 80% of sensory impressions reach the brain through vision, highlighting just how much we rely on what we see.

Eye tracking turns “where we look” into measurable data, revealing attention, cognitive effort, and learning behavior in real time. Interest in this field is growing quickly across education, psychology, and human–computer interaction because it offers a practical window into how people process information. This momentum is fueled by technology that is becoming more accurate, affordable, and accessible.

At our chair, we offer a range of topics for Master’s theses, Bachelor’s theses, and practical projects, including gaze prediction, eye tracking data analysis, and adaptive learning support systems. If you have your own idea, we are happy to help you shape it into a strong project. Depending on your focus, you can work with various setups, including screen-based trackers, eye tracking glasses, or webcam-based eye tracking.

Prerequisites:

- Strong programming skills in Python

- Background in AI or data analysis

- Interest in eye tracking and human-computer interaction

- Student at CIT

- Independent and self-motivated working

If interested, just email parviz.asghari(at)tum.de with a short CV and your grade report.

Master’s Thesis: Comprehensive Facial Data and Gaze Extraction Using iPhone Front Camera and ARKit

Face and eye-gaze tracking technologies have advanced significantly with the rise of augmented reality (AR) and computer vision applications. The iPhone's TrueDepth camera, combined with Apple’s ARKit, provides a powerful tool for capturing detailed facial data and gaze information in real time.

This research aims to explore the full potential of the iPhone front camera and ARKit for extracting various facial attributes, including:

- Face orientation (head pose tracking)

- Gaze estimation (eye-tracking and attention focus)

By leveraging ARKit's advanced face-tracking capabilities, our goal is to collect, analyze, and evaluate facial data under various conditions, exploring its potential applications in human-computer interaction (HCI) and accessibility solutions.

Your goal is to extract the facial features and robustly estimate the gaze direction and head orientation in a real-world scenario. The solutions should be evaluated using ground truth data from external eye-tracking devices or computer vision models.

Prerequisites:

- Experience with OpenCV, Python, and Swift.

- Knowledge of ARKit would be beneficial.

- Self-motivated and independent approach to work.

If you are interested, please send an email to tim.schreiter(at)tum.de and achim.j.lilienthal(at)tum.de with your CV and grade report.

Master’s Thesis: 3D Scene Reconstruction and Real-Time Vehicle Awareness using LiDAR from a mobile phone

Scene reconstruction and awareness of the environment are crucial in the fields of robotics, augmented reality (AR), mapping, and autonomous driving. With the integration of LiDAR sensors in smartphones, e.g., iPhone 12 Pro and later, 3D scanning is now possible without any expensive hardware. These laser scanners gather 3D information in the form of point clouds, from which a scene can be reconstructed.

This research focuses on two key applications of mobile LiDAR:

- Scene Reconstruction – Capturing 3D point clouds to create accurate 2D and 3D visualizations of the scene.

- Surrounding Awareness in Vehicles – Using LiDAR depth data to detect nearby vehicles and obstacles, improving driver assistance and autonomous systems.

Your goal is to acquire 3D point clouds and information about surrounding vehicles in the scene with respect to the ego-vehicle using the LiDAR sensor in the iPhone. Then, the scene will be reconstructed, and vehicle awareness will be implemented.

The thesis duration is 6 months.

If you are interested, please send an email to tim.schreiter(at)tum.de and achim.j.lilienthal(at)tum.de with your CV and grade report.

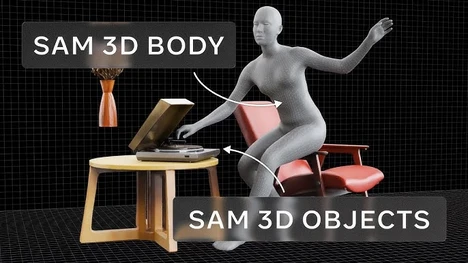

Master’s Thesis: Applying SAM3 for Object Classification and Tracking in Mobile Visual Data

Object classification and tracking are essential tasks in computer vision, with applications in robotics, surveillance, autonomous systems, and augmented reality. Recent advances in foundation models have led to powerful general-purpose vision systems capable of segmentation, tracking, and classification with minimal supervision.

Meta’s Segment Anything Model (SAM) and its newer version, SAM3, represent a major step forward in universal visual understanding. SAM3 is designed to perform robust segmentation and tracking across a wide variety of objects and environments, making it highly suitable for mobile vision tasks. When combined with mobile video data, SAM3 can enable flexible and efficient object understanding without task-specific retraining.

This research explores the application of SAM3 to mobile video data for object classification and tracking. The focus is on evaluating SAM3’s performance, adaptability, and limitations in real-world mobile scenarios such as dynamic scenes, occlusions, and changing viewpoints.

If you are interested, just send an email to tim.schreiter(at)tum.de with a short CV and your grade report.

Master’s Thesis: 3D Eagle Eye Visualization Using Mobile LiDAR and mobile video data

3D top-view (eagle eye) visualization is widely used in urban mapping, autonomous driving, surveillance, and augmented reality (AR). Traditional methods require drone-based LiDAR, satellite imaging, or expensive high-end sensors. However, with LiDAR-equipped smartphones (e.g., iPhone 12 Pro and later) and mobile video, it is now possible to reconstruct a real-time top-down 3D view of the environment.

This research focuses on using mobile LiDAR and mobile video to generate a real-time 3D eagle-eye visualization, enabling users to view their surroundings from a top-down perspective. The system will convert LiDAR and video data into aerial-style 3D maps, which could be used for navigation, urban planning, and virtual reality applications.
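The core of a top-down view can be sketched as rasterizing a 3D point cloud into a grid that stores the highest point per cell. The snippet below is a minimal, hypothetical illustration in Python, not the iOS pipeline itself: it assumes the points are already expressed in a frame where z points up, and the function name and parameters are made up for the example.

```python
import math

def top_down_height_map(points, cell_size, x_range, y_range):
    """Rasterize (x, y, z) points into a 2D grid holding the max height per cell.

    Assumes z is the vertical axis. Cells without any point stay None.
    """
    x_min, x_max = x_range
    y_min, y_max = y_range
    cols = math.ceil((x_max - x_min) / cell_size)
    rows = math.ceil((y_max - y_min) / cell_size)
    grid = [[None] * cols for _ in range(rows)]
    for x, y, z in points:
        # Skip points outside the mapped area
        if not (x_min <= x < x_max and y_min <= y < y_max):
            continue
        # Clamp indices to guard against floating-point rounding at the border
        col = min(int((x - x_min) / cell_size), cols - 1)
        row = min(int((y - y_min) / cell_size), rows - 1)
        if grid[row][col] is None or z > grid[row][col]:
            grid[row][col] = z
    return grid

if __name__ == "__main__":
    cloud = [(0.5, 0.5, 1.0), (0.6, 0.4, 2.0), (1.5, 0.5, 0.3), (5.0, 5.0, 9.0)]
    for row in top_down_height_map(cloud, 1.0, (0.0, 2.0), (0.0, 2.0)):
        print(row)
```

A full system would additionally color the cells from the video frames and update the grid incrementally as new depth data arrives.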

Your goal is to reconstruct a 3D scene and generate a 3D eagle eye visualization. Your tasks include:

- Investigating the state-of-the-art techniques and collecting the data.

- Developing an iOS application using ARKit and RealityKit to capture real-time LiDAR depth data and mobile video data.

- Designing a fusion strategy to combine LiDAR and video data to generate a full 3D view of the surroundings.

- Reconstructing a 3D full mesh scene and implementing real-time rendering techniques for smooth visualization.

The thesis duration is 6 months.

If you are interested, just send an email to tim.schreiter(at)tum.de with a short CV and your grade report.

Master’s Thesis: Leveraging iPhone ARKit Pose Estimation for Visual SLAM and Scene Understanding

Accurate camera pose estimation is a fundamental component of many computer vision and augmented reality applications, including visual SLAM (Simultaneous Localization and Mapping), 3D reconstruction, and spatial understanding. Traditionally, pose estimation relies on classical Visual SLAM pipelines that use feature detection, tracking, and bundle adjustment to estimate camera motion and scene structure.

Recent iPhones provide a powerful built-in pose estimation system through ARKit, which internally combines camera data, IMU measurements, and visual feature tracking to deliver robust real-time device pose estimates. Preliminary experiments indicate that ARKit’s pose estimation performs surprisingly well, even in challenging environments. Furthermore, ARKit exposes information such as feature points, camera transforms, and world mapping status, suggesting that a classical VSLAM-inspired approach is used internally.

This research aims to investigate how Apple’s ARKit pose estimation system can be used, analyzed, and potentially extended for computer vision and robotics-related applications. The work will evaluate ARKit’s accuracy, robustness, and suitability as a replacement or complement to traditional Visual SLAM pipelines.

If you are interested, just send an email to tim.schreiter(at)tum.de with a short CV and your grade report.

Master’s Thesis or Student Project: Development of a new and transferable robotic intent communication module

As modern Autonomous Mobile Robots (AMRs) move away from fixed paths and begin to navigate freely in shared spaces, their intended actions can become ambiguous to human workers. To bridge this communication gap, this thesis focuses on improving the existing concept of the Anthropomorphic Robotic Mock Driver (ARMoD), an interface character designed to communicate a robot's intent to its surroundings. The core mission is to transform current concepts into a transferable, scalable, and manufacturable module that can be easily integrated into various robotic platforms.

Core Focus Areas

The student will engage in a complete mechatronic design cycle, covering the following domains:

- Mechanical & Electrical Design: Creating anthropomorphic CAD geometries optimized for rapid prototyping (3D printing) and designing circuits for power management and actuator control.

- Software & SDK Development: Implementing low-level firmware and high-level software libraries (Python/C++) to abstract hardware complexity, ensuring compatibility with standard robotics middleware like ROS2.

- Analysis & Validation: Reviewing existing open-source robotics platforms and verifying the developed manufacturing and functional concepts.

Who Should Apply?

This project is highly adaptable and suitable for students from several disciplines:

- Mechatronics & Mechanical Engineering (Focus on CAD and hardware integration).

- Electrical Engineering (Focus on circuit design and power management).

- Computer Science (Focus on SDK development and ROS integration).

The work can be completed as an individual assignment or as a cross-disciplinary collaborative thesis.

If you are interested, just send an email to valentin.le-mesle(at)tum.de and tim.schreiter(at)tum.de with a short CV and your grade report.